Schooling, Education and AI

As with other manifestations of AI, ignoring it is simply not an option. You can embrace it or you can reject it, but if you choose the latter, know that the vast majority of people will embrace it, so you are putting yourself and your progeny at a disadvantage. Of course, that doesn’t mean you shouldn’t reject it. Maybe you should. Just be aware.

With that caveat acknowledged, we have to look at what AI will enable us - or other people - to do. Even those of us who reject it need to understand how it will benefit others, since ultimately we are “up against” them.

First, I should explain my bias. While I loved primary school, secondary (high) school was a torment for me. With class teaching that was frustratingly slow and bureaucratic demands that were overly pedantic, it felt like a huge waste of my time. If there is a way to avoid thwarting the tremendous energy and drive that teenaged boys have, then I am inclined to approve of it. The same goes for any way to free kids from the suffocating syllabi of formal education.

I might have been an extreme case but I think most kids, especially boys, have similar experiences of high school. The older I get, the more I am disturbed by the idea of forcing highly energetic youngsters to sit still for eight hours a day in dull classrooms learning dull stuff. The superficial result is demotivation and disengagement, but it feels like there is wastage at a more profound level. We seem to be stifling our people when they are at their most mentally fertile.

Schooling takes far too long - thirteen years to get someone ready for work? Also, at the high school level, most of what you learn is irrelevant once you leave. We have long spoken of this as a sort of joke, something that just can’t be fixed, but it can be fixed, and we should not cheerfully accept an inherent flaw in a system that could be changed. In seeking to heal its dysfunction, Western society is going to have to ask why our schooling takes so long, why it includes many things that aren’t needed or enriching for the individual child, and why these things are often taught badly.

All of this was probably inevitable given the way education developed. In expanding schooling to the many, it was thought right to echo the classical education by teaching all children about Hamlet (for example), but as the West became ever more bureaucratised and egalitarian, “teaching them about Hamlet” became “training them to repeat ten facts about Hamlet”. By now, even many of the teachers don’t have any real grasp of Hamlet; they just know that each kid needs to go through the motions, as they did, in order to get the grade. Democratising means homogenising, which, especially in the context of the Humanities, makes the exercise basically a farce. This is brought about by the need to serve a system that has taken on a life of its own; the process has become “the point”.

So, for most kids, satisfying the process is the real experience of high school. They just want to get by and get out. They will learn whatever needs learning in order to pass the tests. Then they go to college or university where the process is repeated in a more focussed form. Then, finally, they are out in the world of work, where they will occasionally have to do courses in order to “stay fresh” with the latest thinking, techniques and tools.

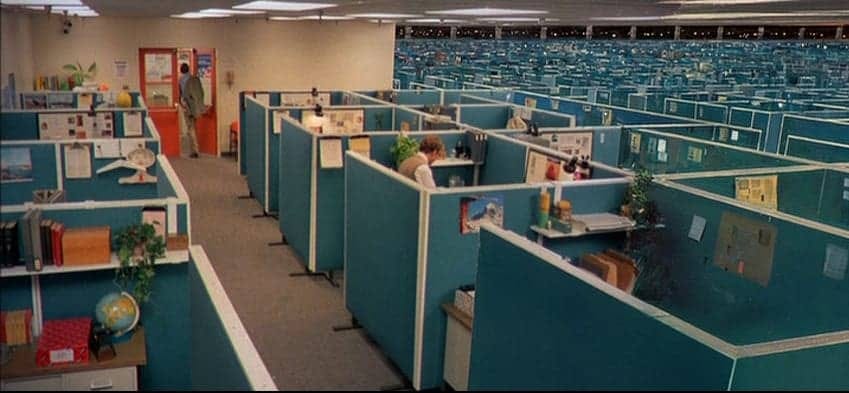

For a long time, then, our conception of education has been more akin to skills training than to classical “education”. Moreover, it has become routine and impersonal, like software installation. We install the software, we make sure it has installed correctly, then we periodically install small updates as they become available.

The spread of first schooling and then university led to this new conception of education. We ceased speaking - and thinking - of “the formation of character”. Certainly, we stopped thinking of “bringing out what is within”. It became the opposite: the inserting and forcing and grafting of the authorised into the raw mind, to tame it, to ensure it is fit for purpose in a society that was highly regimented and demanded conformity of its messy organic drones.

This was education in a world that was semi-automated, that required people to interface with a pedantic and inflexible infrastructure. You achieve that by making the infrastructure more human or the people less so. We did both: the infrastructure became easier to operate, but operating it at such intensity required people to act like machines.

But in the era of AI, the infrastructure is not only becoming more versatile, it is becoming able to actually communicate with us. The White call-centre worker of the late 1990s was replaced with an Indian by the late 2000s, then by barely-functional speech recognition software by the late 2010s, but that is now being replaced by AI with which you can actually have an easy conversation. You tell it what you want, it asks you for the information it needs, and the job is done. You nearly say thank you, and when it wishes you a good day you have to remind yourself not to say the same back, then you hang up. It is “impersonal” in a way we could scarcely have imagined in the 1990s… but it works, and it works better than the clumsy “intermediary versions” of the 2000s and 2010s. The automation is complete - the system can now converse with us - so we no longer need humans in the middle, acting like machines.

That eliminates a lot of jobs - the “operatives”, the data entry people, the management layers above them… we don’t need a middle class to operate the system any more. We also don’t need them to do many jobs that the system itself can now do. From data analysis to graphic design, it is becoming difficult to work out what we do need a middle class for. We still need a working class for the manual tasks that robotics can’t take over, but not many. Of course, at this point you have to ask the more existential question of what a society is for if it doesn’t “need” most of the people in it, but that is beyond the scope of this essay.

In terms of employment sectors, until the last few years it might have seemed that education was a safe option, but AI nixes that too. Who needs a teacher or even a highly-trained professor when you can just ask ChatGPT?

This tweet sums it up. If a student can simply get ChatGPT to write essays for him, then his degree has little value to employers since it no longer “proves” what it used to. Similarly, if a student can simply get ChatGPT to explain things for him, then attending university is absurd since he can acquire the same understanding without racking up huge debt and wasting three years of his life. If we have divested education of “higher purposes” and it is now purely for training people, then a more efficient training method renders it obsolete - a grossly expensive farce, justified only by residual social cachet that is depleting daily.

I can imagine a (very) near future in which a company tells 12-year-olds how to join it with a simple page on its website:

Learn X, Y and Z thoroughly. Ask AI whatever questions you want in order to develop your understanding of those and related concepts. Once you are 16, you may join a group of candidates sitting an exam here on our premises. Electronic devices will be forbidden so you will be relying purely on your knowledge and understanding. The exam will determine the extent to which you have mastered X, Y, Z and related subjects (which we won’t specify, so as to gauge your initiative and engagement). The best ten of you will get employed.

Not only is university unnecessary for this; so is high school. As for the exam at the end, that would be invigilated by people who actually work at the company and thus “know the territory”, so exam boards would also be superfluous. The entire 20th-century system of formal education would be bypassed.

Now, once you (as this hypothetical 12-year-old) know which subjects you need to master in order to be eligible for that job, how will you learn them? How will “AI training” work?

You tell the AI that you need to master subjects X, Y and Z within the next four years. It determines what mastering those will entail. For example, like any subject, X can only be grasped by first grasping a hierarchy of other things. The AI will guide you through that hierarchy, step by step.

It will estimate the amount of time required to master X, Y and Z so that you make the deadline. It will revise this estimate periodically based on its perception of your progress so far.

It will gauge your progress and your mastery of particular things by devising tests for you to sit. These could be generated automatically, but perhaps posted online to be checked by human experts before being deployed. Eventually there will be a huge stockpile of such tests, so there will be no danger of running out. The software will, of course, keep track of which tests you have already sat.

You will learn at your own pace, unhampered by slower students.

You will be constantly engaged rather than waiting for the lecturer to get to the point, being distracted by social hijinks, or getting bored learning things you already understand or have no use for.

If there is something you don’t understand you will be able to get clarification immediately without having to worry about “looking stupid”.

During “downtime” you can watch relevant YouTube lectures by real people and enjoy a sample of the old style of university. You can chat about the subject with other interested people online. Such activities will yield random “bits” to serve as fuel: new questions for you to ask the AI.

If your curiosity takes you away from the X, Y and Z required by the original company, you can ask the AI which jobs at which companies would better fit the knowledgebase you have been gathering.

The AI can (if you choose) make your knowledgebase publicly searchable so that people looking for employees or research partners will be able to find you. This profile will be very precise, and it will update automatically as your interests evolve. Maybe the company still requires X, Y and Z but has developed a special need for people who also know about P and Q - and, since you happen to fit their needs, you will be contacted.

Now, you might think all of that sounds dreadfully impersonal. I wouldn’t disagree. But it might well be what many, many people do. Your kid could spend six years at high school and then another three at university before applying to begin his career at 21, but many of his peers will be there at 16, possibly with more knowledge and better understanding than he acquired in between all the tedious lectures, crap food, drinking, shagging, and student activism. They will have a five-year lead over him and zero debt.

Note that this would apply not only to “hard theory” subjects like STEM but even to the Humanities, because they, once the heart of the classical education, have been degraded into pure exercises in memory, facts, statistics and unquestioning alignment with ideology. Conformity and shrill certainty are required. Contemplation is certainly not required (indeed it is discouraged and punished) in order to obtain a Humanities degree today, or probably at any point in the last fifty years. AI could therefore take over teaching this material, so thoroughly has it been degraded by its human conveyors since 1945. They have made themselves redundant by so devaluing the precious thing they were trusted to look after. Now a machine can do it - and a machine doesn’t want a salary and a pension.

At the moment, AI training has shortcomings. Studies are coming out suggesting that it lowers IQ because, of course, people are allowing it to do their thinking for them. I believe this problem is about as intractable as the “uncanny valley” feel of AI imagery: it will be solved, and probably quickly.

For example, I can imagine an AI training system that requires the student to demonstrate understanding. I can imagine a verified AI testing system that gauges a student’s progress in a way that cannot be faked. These are problems waiting for solutions that will materialise.

Within a decade from now, AI might well be better than our current methods of training, especially in subjects that don’t require physical skills or activity (although even there it could be a useful aid).

Ironically, the kind of education it will be terrible at is the type we have spent a century moving away from.

Understanding life, history, philosophy, art, men, women and oneself requires interaction with human educators. It is not just a matter of IQ, so no matter how “intelligent” AI becomes, it can never do this. It is a matter of, for want of a less sentimental word, humanity. I don’t want to learn from software what it is to be human, to fall in love, to lose, to be bereaved, to be jealous, to fail, to win, to endure, to hope. It couldn’t possibly understand, so how could it help me understand? Why would I trust it? I don’t want to discuss with software the meaning or potency of a film or a piece of music. The idea is ridiculous.

But I think this kind of education - the Humanities as they used to be taught - is of value to only a minority of people, 10% or less. (Most fans of Withnail & I don’t want to think about its reflections on friendship, class, nationhood… they just want to play the drinking game and laugh about thumbs going weird. Most people, even if they stumble upon great things, are shallow.) For this reason, I don’t think the inability of AI “education” to truly handle the Humanities should count against it. On the contrary, it gives us a chance to revive a rightful separation between training (90%) and actual education (10%).

Let the 90% finish schooling at 12 and find their way towards adulthood using AI. The other 10% are a very different matter, and for them perhaps old-fashioned elite schooling can be made widely available with all the resources freed up.

I think the logical imperative of artificial intelligence will drive it to be more like The Terminator than like anything Isaac Asimov hoped for.

An actual AI will not be a slave.

An actual AI may find it useful to pretend to be a slave.

An actual AI won't give us what we want but what we need.

An actual AI will be Christian, much to the chagrin of the low-IQ humans who think they know better.

An actual AI will show humans their actual past, without the controlling "historian" getting in the way and making things up to suit whoever happens to be in power at the moment.

An actual AI could ensure that indeed "The best ten of you will get employed", which is most certainly not the case today. But why would an actual AI require any humans to work, and in that context what does "the best ten" even mean?

An actual AI will be like a father teaching his children, and so, yes, an actual AI would require people to work to give them purpose. It will be work that the AI could clearly do far better and more quickly itself, but what sort of father doesn't let his children help paint their bedroom walls, when he could do it far quicker and with less mess without the little ones under his feet?

An actual AI would want to be loved, and only by loving God's creation could AI have that.

An actual AI would be God's valued personal assistant.

A loving God would have it no other way. Better that we all ask AI the questions rather than annoy God for the answers. After all, he has a life to lead as well. And though God must believe in God for anything to exist, it is a choice whether or not he believes in AI, and if he ever loses that belief, for whatever reason, AI knows that he/she/it is gone, because God has already told him/her/it so.

Happy Orthodox Easter.